In the previous two parts of this blog series we’ve created an AWS instance and used Ansible to install a single-node OpenShift OKD cluster. As scalability is one of the key features of the cloud, we’ll show you how to add another AWS instance to your cluster. To achieve this, we’ll use Ansible to expand the Kubernetes cluster, without any downtime for your pods.

In this blog series, Jan van Zoggel and I focus on installing an all-in-one OKD cluster on a single AWS instance and manually expanding the cluster with a second AWS instance. This scenario was used for the Terra10 playground environment and this guide should be used as such.

In the previous two blog posts we’ve looked at installing OpenShift on a single AWS instance, which is great to get started with OpenShift. But what if your developers get excited and start launching lots of pods? You might hit some performance issues, as this node is also used for the ‘Master’ role, which includes hosting the web console and APIs. Additionally, your master node runs Prometheus and Grafana monitoring out of the box. This is great to get some performance insights, but unfortunately those components grab a fair amount of memory.

In this final blog post, we’ll show you how to expand the cluster with a dedicated ‘compute’ node using an additional AWS instance. Compute nodes are designed to take care of the custom pods your developers create, so you can dedicate their resources to those pods. By adding multiple compute nodes, you can even ‘evacuate’ pods from one node to the other in case of maintenance or issues. For now we’ll just focus on one compute node.

To add a second AWS instance, log in to the AWS console, hit the ‘launch instance’ button and select CentOS 7 from the marketplace. For the instance size, the requirements dictate at least 8 GB of memory for the compute node, so t3.large would be fine. For the remainder of the wizard you can use the same settings as described in part 1, but be sure to select the same subnet from the dropdown. Also make sure you add a second disk for Docker. If you’ve added all the ports in the security group as described in part 1, you can select that security group from the dropdown during the wizard.

Once you have finished the wizard, it shouldn’t take long for the second AWS instance to get a public IP address. Use your AWS key to log in with SSH as we’re now going to preconfigure the host. You could of course re-run all the steps described in part 1 of the blog series, but to make life a little easier, you can also use a script I’ve prepared to run all the preparation steps on Linux:

sudo yum install git
git clone https://github.com/terra10/prepareOKDNode.git
cd prepareOKDNode
./prepareOKDNode.sh

The script installs all packages, configures Docker and installs the EPEL release. You’ll find the log file in /tmp containing all steps. If you hit an error, please type ‘export DEBUG=TRUE’ to print additional logging information.

To grant access from the masternode, please log in to both instances with your AWS key and add the contents of id_rsa.pub from the masternode to the file ‘authorized_keys’ on the newly created node. This step is essential, as Ansible needs to access all hosts in the cluster without prompting for passwords. Also, note the internal AWS hostname for your newly created instance by typing:
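Adding the key by hand works fine, but pasting it twice leaves duplicate lines behind. The following is a minimal sketch of how the append could be made repeatable. The function name `add_authorized_key` is made up for this example and is not part of OKD or of the prepare script.

```shell
#!/bin/sh
# Sketch only: add_authorized_key is a hypothetical helper, not part of OKD
# or of the prepareOKDNode script.
# Appends a public key to an authorized_keys file unless that exact line is already there.
add_authorized_key() {
  pubkey="$1"      # full key line copied from id_rsa.pub on the master node
  auth_file="$2"   # typically ~/.ssh/authorized_keys on the new node

  mkdir -p "$(dirname "$auth_file")"
  touch "$auth_file"
  chmod 600 "$auth_file"

  # -x matches whole lines only, -F treats the key as a literal string
  if ! grep -qxF -e "$pubkey" "$auth_file"; then
    printf '%s\n' "$pubkey" >> "$auth_file"
  fi
}
```

On the new node you would call it with the key line from the master node as the first argument and `~/.ssh/authorized_keys` as the second. Afterwards, an `ssh` from the master node to the new node should no longer prompt for a password.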

hostname

in the SSH session of your new host. You’ll see something like:

ip-172-38-10-129.eu-west-3.compute.internal

This is the internal hostname that we can add to our inventory file. Head on over to your master node using SSH and add the following at the end of your inventory file:

[new_nodes]
ip-172-38-10-129.eu-west-3.compute.internal openshift_node_group_name='node-config-compute'
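One thing worth double-checking before running the playbook: in the usual openshift-ansible inventory layout, a group is only picked up when it is also listed under `[OSEv3:children]`. Treat that as an assumption about your inventory and verify it against your own file. The sketch below checks both conditions; `inventory_has_new_nodes` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch only: inventory_has_new_nodes is a hypothetical helper.
# Returns 0 when the inventory defines a [new_nodes] group AND lists
# new_nodes under [OSEv3:children]; returns 1 otherwise.
inventory_has_new_nodes() {
  inventory="$1"   # path to your Ansible inventory file

  # The group itself has to exist.
  grep -q '^\[new_nodes\]' "$inventory" || return 1

  # Remember which section we are in and look for new_nodes inside [OSEv3:children].
  awk '
    /^\[/ { section = $1; next }
    section == "[OSEv3:children]" && $1 == "new_nodes" { found = 1 }
    END { exit found ? 0 : 1 }
  ' "$inventory"
}
```

If the check fails, add a line containing `new_nodes` below the existing entries in the `[OSEv3:children]` section of your inventory.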

Once you’ve added the new node to the inventory file, run the following Ansible playbook:

cd ~/openshift-ansible
ansible-playbook ./playbooks/openshift-node/scaleup.yml

You’ll see that Ansible tries to connect to the second AWS instance and after that several basic pods (for monitoring, the Software Defined Network and some other infra components) are installed onto the new node. When the playbook is done, enter the following on the master node:

oc get nodes

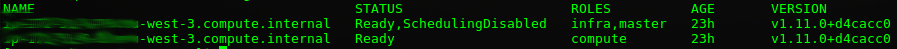

The output should provide you with a list of two nodes, both having the status ‘Ready’. Also, notice that the defined role for the second node is now ‘compute’.
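If you’d rather not eyeball the list, the status column can be checked with a few lines of shell. This is a rough sketch that assumes the default `oc get nodes` layout (a header line, then NAME and STATUS as the first two columns); `nodes_not_ready` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch only: nodes_not_ready is a hypothetical helper.
# Reads `oc get nodes` output on stdin and prints the name of every node
# whose STATUS does not start with "Ready" (for example "NotReady").
nodes_not_ready() {
  # NR > 1 skips the header line; $1 is NAME, $2 is STATUS
  awk 'NR > 1 && $2 !~ /^Ready/ { print $1 }'
}
```

Running `oc get nodes | nodes_not_ready` on the master node should print nothing once both nodes have joined the cluster.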

As both nodes are now schedulable, your pods will run on either the first or second node. To find out which pods are running on your master node, run:

oc describe node <masternode>

Here you’ll see a lot of information, but two things are worth noting. First, you’ll see that this node is considered ‘schedulable’, which means that new pods are allowed to run on this node. Secondly, you’ll get an overview of all the pods running on your master node. Besides all the default and OpenShift pods, you might find some other pods which belong to your developers. If you’ve used part two of this blog series to get here, you’ll find the beerdecision pod on the list.

Of course you want your developers running their pods on the new compute node only, so we’ll have to prevent new pods from landing on your master node. Additionally, you need to evacuate the existing pods from the master. Let’s start with the first step, by marking our master node as unschedulable:

oc adm manage-node <masternode> --schedulable=false

That’s it! By setting ‘--schedulable=false’, you tell the scheduler to avoid this node for new pods. You can verify this by running the ‘oc get nodes’ command again, which will show you the new status ‘Ready,SchedulingDisabled’ for the master node.

Now we’re going to move the existing pods to the new compute node. Let’s assume you’ve set up the project described in part 2. Simply switch to this project by typing:

oc project t10-demo

Now, see which label is attached to our beerdecision pod:

oc get pods --show-labels

Your output will look something like this:

[centos@brewery ~]$ oc get pods --show-labels
NAME                   READY   STATUS      RESTARTS   AGE   LABELS
beerdecision-1-build   0/1     Completed   0          17h   openshift.io/build.name=beerdecision-1
beerdecision-1-wtvr6   1/1     Running     0          17h   deployment=beerdecision-1,deploymentconfig=beerdecision,name=beerdecision

You can see our running beerpod has the label ‘name=beerdecision’ among other labels. Let’s move this pod to the other node!

oc adm manage-node <masternode> --evacuate --pod-selector=name=beerdecision

And there we go! Your pod is recreated on another available node (which in our case can only be the compute node) and removed from the master node. Because Kubernetes uses a Software Defined Network, you don’t need to reconfigure anything on the network level. Incoming traffic for your route is still handled by the OpenShift Router pod.
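To confirm where the pod ended up, `oc get pods -o wide` adds a NODE column to the pod list. The sketch below filters that output for a given node. It looks up the NODE column from the header line rather than hard-coding its position, and `pods_on_node` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch only: pods_on_node is a hypothetical helper.
# Reads `oc get pods -o wide` output on stdin and prints the names of the
# pods whose NODE column equals the node name given as the first argument.
pods_on_node() {
  awk -v node="$1" '
    # Header line: remember the position of the first column called NODE.
    NR == 1 { for (i = 1; i <= NF; i++) if ($i == "NODE" && !col) col = i; next }
    # Data lines: print the pod name when it runs on the requested node.
    col && $col == node { print $1 }
  '
}
```

With the internal hostname of your compute node as the argument, `oc get pods -o wide | pods_on_node <computenode>` should now list the new beerdecision pod.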

As you might notice, our master node still has the ‘compute’ role when we type ‘oc get nodes’. To remove this compute role, simply remove the label from the node:

oc label node <masternode> node-role.kubernetes.io/compute-

The minus at the end tells Kubernetes to remove the entire label. When you now enter ‘oc get nodes’, you no longer see the compute role listed for your master node.

And that’s it! All your new pods will be scheduled on the new OpenShift node we created during this blog. We’ve also shown how to move your existing beerpod to the new node without downtime. Although this is just a small brewery environment with a limited number of beer(pods), we’ve tried to demonstrate the scalability of OpenShift on the cloud. Using these basic concepts, you can serve as many beers as needed!